Three seconds. That’s all a scammer needs to steal your voice and use it to drain your bank account.

Artificial intelligence has transformed from science fiction into daily reality—and criminals have been paying attention. In 2026, AI-powered scams have reached a level of sophistication that makes them nearly impossible to detect without knowing exactly what to look for. The numbers tell a chilling story: deepfake fraud surged 700% in early 2025, romance scam losses topped $1.3 billion in 2024, and financial losses from AI scams are projected to hit $40 billion by 2027 in America alone.

This isn’t your grandmother’s email scam with broken English and obvious red flags. Today’s AI scammers can replicate your daughter’s voice perfectly, create video calls with “executives” that look completely real, and craft personalized messages that bypass even expert detection. But here’s the crucial part: you can protect yourself. This guide will show you exactly how these scams work and the practical steps you can take today to stay safe.

The Voice Clone: When Mom’s Emergency Call Isn’t Mom

How It Works

Sharon Brightwell’s nightmare began with a phone call that sounded exactly like her daughter—crying, desperate, claiming she’d been in a horrific car accident and lost her unborn baby. The voice begged for $15,000 to pay for a lawyer and avoid jail time. Sharon, overwhelmed by the raw emotion in her “daughter’s” voice, sent the money immediately.

Only it wasn’t her daughter. It was an AI voice clone, generated from a few seconds of audio scraped from social media.

According to recent data, 70% of people surveyed admit they cannot tell the difference between a cloned voice and the real thing. McAfee’s research team demonstrated that with just three seconds of audio—easily harvested from a Facebook video, TikTok post, or Instagram story—AI tools can create a voice clone with 85% accuracy. And the technology is getting better every day, with some tools now achieving nearly 90% accuracy.

Real Victims, Real Losses

The “grandparent scam” has existed for years, but AI has supercharged it into something far more dangerous. In Dover, Florida, scammers used voice cloning to convince a woman her great-grandson had been arrested after a car accident. The voice was perfect: the panic, the desperation, even the way he said “Mawmaw.” She eventually realized it was a scam, but only after considerable distress.

A Michigan woman named Beth Hyland lost $26,000 to a romance scammer who used AI-generated voices and deepfake video on Skype calls. A Colorado mother wired $2,000 to scammers who perfectly cloned her adult daughter’s voice, complete with crying and panic. The emotional manipulation is the weapon: when you believe your loved one is in danger, logic shuts down.

The Safe Word Solution

Security experts widely recommend one simple defense: establish a family safe word or 4-digit code that you never share online. This low-tech solution defeats even the most sophisticated AI clone because the system can only say what the scammer types; it can’t know information that has never been posted publicly.

According to the National Cybersecurity Alliance, every family should agree on this secret verification method today. It should be:

- Easy to remember but completely random (not a birthday, anniversary, or common phrase)
- Shared only in person, never via text or email
- Different from your passwords
- Something you practice asking for in hypothetical emergency scenarios

If someone calls claiming to be a family member in distress, simply ask for the code word. No exceptions. A real emergency can wait 30 seconds for verification. A scam cannot.
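One way to get a safe word that is random rather than personal is to let a computer pick it. The sketch below is only an illustration: the word list and the function names `make_safe_phrase` and `make_safe_code` are hypothetical, and a real word list should be far longer. It uses Python’s `secrets` module, which draws from a cryptographically secure random source.

```python
import secrets

# Hypothetical word list for illustration only. A real list should contain
# thousands of words so that a phrase is hard to guess.
WORDS = [
    "maple", "lantern", "copper", "orbit", "pickle", "harbor",
    "velvet", "cactus", "marble", "thunder", "pretzel", "walrus",
]


def make_safe_phrase(word_count: int = 3) -> str:
    """Join randomly chosen words into a phrase that has no personal meaning."""
    if word_count < 1:
        raise ValueError("word_count must be at least 1")
    return " ".join(secrets.choice(WORDS) for _ in range(word_count))


def make_safe_code(digits: int = 4) -> str:
    """Return a random numeric code as text, so leading zeros are kept."""
    if digits < 1:
        raise ValueError("digits must be at least 1")
    return "".join(str(secrets.randbelow(10)) for _ in range(digits))
```

Run it once, write the result down on paper, and share it in person. The point of generating it this way is that nothing in the phrase can be guessed from your social media.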

The Deepfake Deception: When Seeing Is No Longer Believing

The $25.6 Million Video Call

In 2024, employees at a multinational company joined what appeared to be a normal video conference call with their CFO and other executives. The CFO instructed an employee to transfer $25.6 million for an urgent acquisition. The employee complied—after all, they could see the CFO on video, hear his voice, and other familiar colleagues were present in the meeting.

Every person on that call was a deepfake.

This incident represents the new frontier of AI scams: real-time deepfake video calls that are virtually indistinguishable from reality. Scammers used AI to clone the faces, voices, and mannerisms of company executives, creating a completely fabricated video meeting.

Romance Scams 2.0

Dating apps have become hunting grounds for AI-powered romance scammers. In Hong Kong, police recently arrested 27 people for using AI face-swapping technology to create fake personas on dating platforms in real-time. When victims requested video calls to verify their matches, scammers used deepfake technology to transform into attractive, realistic potential partners during live conversations.

A Los Angeles woman lost $80,000 to a scammer impersonating “General Hospital” actor Steve Burton, complete with AI-generated videos and a fake marriage proposal. A French woman reportedly lost $850,000 over 18 months to criminals posing as Brad Pitt using AI-generated images. These aren’t isolated incidents—they’re part of a coordinated, global operation using AI tools that anyone can access.

Experian’s 2026 fraud forecast warns that AI-powered romance scams will “respond convincingly, build trust over time, and manipulate victims with precision and emotion,” making them “harder to distinguish from real people.”

How to Spot a Deepfake

While deepfake technology is improving rapidly, current generation tools still have telltale signs:

Visual red flags:

- Unnatural or jerky movements when the person turns their head
- Inconsistent lighting or shadows, especially around the face
- Strange blinking patterns (too frequent, too rare, or none at all)
- Unusual reflections in eyes or glasses
- Difficulty tracking hand movements near the face
- Background inconsistencies or blurring

Audio red flags:

- Slight delays between lip movements and speech
- Unnatural phrasing or word choices
- Background noise that doesn’t match the visual environment
- Audio quality that seems disconnected from video quality

Behavioral red flags:

- Person keeps their head very still during the entire conversation
- Avoids turning to the side or showing profile views
- Won’t perform simple verification actions (wave, hold up fingers, write something specific)
- Conversation feels scripted or the person avoids spontaneous questions

Protection strategy: If you receive a video call requesting money, sensitive information, or urgent action, end the call immediately, even if the caller looks like someone you know. Contact the person through a different, verified method: call their known phone number directly, and don’t use any contact information provided during the suspicious call. Ask them a specific question only they would know. Trust is essential, but verification is mandatory in the age of deepfakes.

The Perfect Phishing Email: Grammar-Checked by AI

The Death of Obvious Scams

Remember when phishing emails were easy to spot? The “Nigerian prince” with terrible grammar, the obviously fake bank letter, the message riddled with spelling errors? Those days are gone.

Generative AI tools like ChatGPT (and their criminal counterparts like FraudGPT, WormGPT, and DarkBARD) can now craft emails that are linguistically perfect, emotionally manipulative, and personally tailored to you specifically. AI analyzes your social media profiles, LinkedIn connections, recent posts, and publicly available information to create “spear phishing” messages that reference real details about your life.

Real-World Example

Imagine receiving an email that:

- Uses your actual job title and company name
- References a real project you recently posted about on LinkedIn
- Mentions your boss by name and mimics their communication style
- Contains perfect grammar and professional formatting
- Includes an urgent request related to your actual work responsibilities
- Links to a website that looks identical to your company’s internal systems

That’s AI-powered phishing. The sophistication level has reached the point where even cybersecurity professionals can be fooled without careful verification.
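A look-alike website can copy everything except the real domain name, so the hostname in a link is one of the few signals a scammer cannot fake. The sketch below shows one possible way to check it. It is a simplified illustration, not a complete phishing filter: the `TRUSTED_DOMAINS` set and the `is_trusted_link` function are hypothetical names, and the domains are placeholders you would replace with addresses you have confirmed independently.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with domains you have verified yourself.
TRUSTED_DOMAINS = {"example.com", "intranet.example.com"}


def is_trusted_link(url: str, trusted=TRUSTED_DOMAINS) -> bool:
    """Return True only for https links whose host is a trusted domain
    or a subdomain of one."""
    try:
        parts = urlsplit(url)
    except ValueError:
        return False  # malformed URL
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower().rstrip(".")
    # Compare whole labels, so "example.com.evil.test" does not match.
    return any(host == d or host.endswith("." + d) for d in trusted)
```

For example, `is_trusted_link("https://example.com.evil.test/login")` returns `False` because the real host is `evil.test`, even though the link starts with a familiar name. A check like this only narrows the risk; the safest habit is still to type the address yourself or use a saved bookmark.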

According to IBM and Red Hat’s research, the financial sector was the second-most targeted industry in 2024, accounting for 23% of reported incidents—up from 18% the previous year. The attacks are increasing in both volume and sophistication, with AI enabling criminals to launch thousands of highly personalized attacks simultaneously.

Protection Tactics

Never trust urgency. Scammers create artificial time pressure to prevent you from thinking critically. Legitimate businesses and organizations rarely require immediate action without verification opportunities.

The verification triangle:

1. Receive suspicious request
2. Do not click any links or use any contact information in the message
3. Contact the organization or person directly using contact information you find independently

Enable two-factor authentication (2FA) on all accounts. This adds a critical second layer of security. Even if scammers obtain your password through AI-assisted attacks, they cannot access your account without the second verification step.
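To see why a stolen password is not enough, it helps to look at how a common second factor works. Many authenticator apps use time-based one-time passwords (TOTP, defined in RFC 6238): the app and the service share a secret, and each derives a short code from that secret and the current time. The sketch below is a minimal standard-library illustration using the default HMAC-SHA1 variant. The function names `totp` and `verify` are chosen for this example, and a real service would also handle clock drift, rate limiting, and secret storage.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at_time: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if at_time is None:
        at_time = time.time()
    counter = int(at_time) // step  # number of 30-second windows since 1970
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    value = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at_time: Optional[float] = None) -> bool:
    """Compare the submitted code with the expected one in constant time."""
    expected = totp(secret, at_time)
    return hmac.compare_digest(expected.encode(), submitted.encode())
```

With the test secret published in RFC 6238 (`b"12345678901234567890"`) and `at_time=59`, `totp(..., digits=8)` returns `"94287082"`, matching the RFC’s test vector. Because the code changes every 30 seconds and depends on a secret that never leaves your device, a scammer who has only your password cannot produce it.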

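Use password managers and unique passwords for every account. Password-cracking tools can test billions of guesses per second against stolen password hashes, and AI models trained on leaked password databases can prioritize the patterns people actually use. Reusing passwords across multiple sites creates a cascading vulnerability: one breach exposes every account that shares the password.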

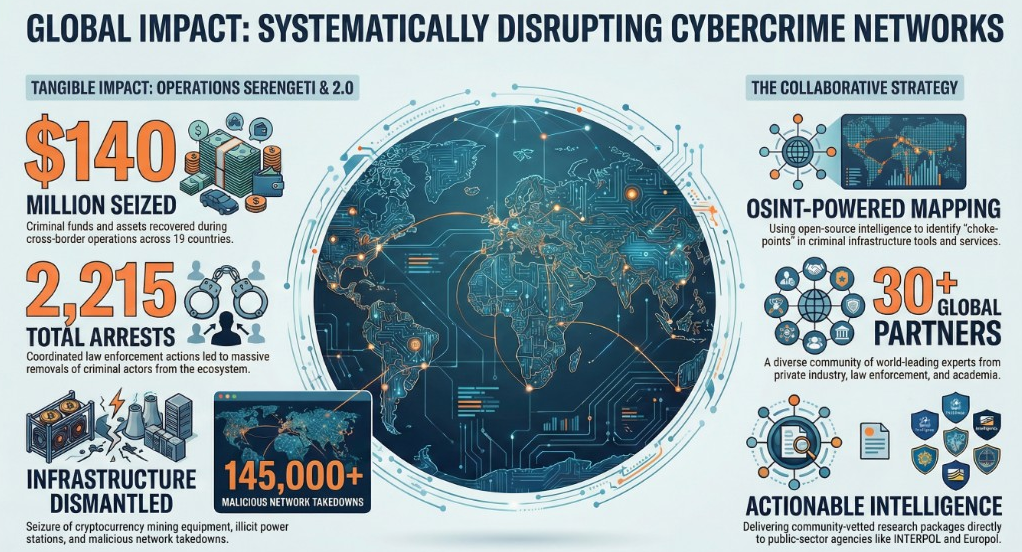

https://initiatives.weforum.org/cybercrime-atlas/home

The Business Email Compromise: When Your CEO’s Voice Isn’t Real

AI-powered business scams are targeting companies at an alarming rate. The CEO of WPP, a major UK corporation, was recently targeted by scammers who cloned his voice for use on a fake Teams-style video call. Ferrari executives received WhatsApp voice messages from someone impersonating the Ferrari CEO requesting urgent supplier payments.

These aren’t random attacks—they’re carefully researched operations targeting specific companies and individuals. Scammers study organizational charts, communication patterns, and public information to craft convincing impersonations of executives.

For businesses: Implement mandatory verification protocols for any financial transfers or sensitive data requests, regardless of apparent authority. Create internal policies that require multi-person approval for significant transactions. Train all employees on voice clone and deepfake recognition.
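The policy described above can be expressed as a simple rule that software or a checklist enforces before money moves. The following sketch is one hypothetical way to model it. The threshold, the number of approvers, and the `TransferRequest` class are all assumptions made for illustration, not a standard or a recommendation for specific values.

```python
from dataclasses import dataclass, field

# Hypothetical policy values for illustration only.
APPROVAL_THRESHOLD = 10_000  # transfers at or above this need extra sign-off
REQUIRED_APPROVERS = 2


@dataclass
class TransferRequest:
    requester: str
    amount: float
    # True only after confirming through a known phone number or in person,
    # never through the call or message that made the request.
    verified_out_of_band: bool = False
    approvers: set = field(default_factory=set)

    def approve(self, employee: str) -> None:
        """Record an approval; requesters cannot approve their own transfer."""
        if employee == self.requester:
            raise ValueError("requester cannot approve their own transfer")
        self.approvers.add(employee)

    def may_execute(self) -> bool:
        """Apply the policy: verification always, extra approvers for large sums."""
        if not self.verified_out_of_band:
            return False
        if self.amount < APPROVAL_THRESHOLD:
            return True
        return len(self.approvers) >= REQUIRED_APPROVERS
```

Under this rule, a request that appears to come from the CFO on a video call is treated like any other: it stays blocked until someone verifies it through a separate channel and, for large amounts, until two other people sign off. Seniority does not bypass the check, which is the property that defeats an impersonated executive.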

AI Investment Scams: Celebrity Deepfakes Promoting Crypto

Deepfake videos of Elon Musk, prominent investors, and other celebrities are circulating across YouTube, X (Twitter), and Facebook, promoting fraudulent cryptocurrency schemes and “guaranteed return” investments. These videos are professionally produced, feature realistic facial movements, and often include convincing backgrounds and contexts.

Joseph Ramsubhag, a retired nurse, lost hundreds of thousands of dollars after seeing a deepfaked Elon Musk promoting a cryptocurrency investment. The scammer continuously updated him about his “growing wealth,” encouraging him to invest more. When he tried to withdraw his money, he discovered it was all gone.

Protection: No legitimate investment opportunity requires immediate action or comes from unsolicited contact. Research any investment through official channels. Verify celebrity endorsements on the celebrity’s official accounts and website; a checkmark alone is not proof, since some platforms sell verification badges. If returns sound too good to be true, they are.

Your Defense Plan: Practical Steps You Can Take Today

Immediate Actions (Next 30 Minutes)

1. Create your family safe word and share it in person with close family members
2. Enable 2FA on your bank accounts, email, and social media
3. Review your social media privacy settings to limit who can see videos and audio of you
4. Check your financial account activity for any unusual transactions

This Week

1. Install and update antivirus software with AI-scam detection capabilities
2. Review what personal information is publicly available about you online
3. Talk to elderly family members about these scams; seniors lost $3.4 billion to various scams in 2023
4. Set up account alerts with your bank to notify you of suspicious activity
5. Consider a credit freeze if you’re concerned about synthetic identity fraud

Ongoing Vigilance

The “Manual Redial” rule: If you receive a suspicious call claiming to be from a family member, hang up immediately. Do not use the redial button (which might connect you back to the scammer). Instead, manually dial the person’s number from your saved contacts. If they’re safe at home, you’ve confirmed it was a scam.

Question everything urgent: Legitimate emergencies allow time for verification. Scam emergencies demand immediate action specifically to prevent verification.

Verify video calls: If someone makes an unusual request during a video call, ask them to perform a simple action: wave in a specific way, hold up a certain number of fingers, write something on paper and show it to the camera. Deepfakes struggle with real-time, specific requests.

Trust your instincts: If something feels wrong—even if you can’t identify exactly why—pause and verify. Your brain often detects subtle inconsistencies before your conscious mind can articulate them.

The Bottom Line: Knowledge Is Your Best Defense

AI scams are sophisticated, convincing, and increasing at an exponential rate. But they’re not unstoppable. The criminals behind these scams rely on three things: urgency (preventing you from thinking), emotion (overriding your logic), and ignorance (you not knowing these techniques exist).

By reading this article, you’ve eliminated the ignorance factor. By implementing the safe word system and verification protocols, you neutralize their urgency tactics. By understanding how the emotional manipulation works, you can recognize and resist it when it happens.

Share this information with your family, especially elderly relatives who are disproportionately targeted. Have the awkward conversation about the family safe word. Practice asking for verification. Make it weird to send money without multiple confirmations.

In 2026, your voice is data, your face is data, and your trust is a vulnerability. But with awareness, verification protocols, and healthy skepticism, you can protect yourself from even the most sophisticated AI-powered scams. The technology may be new, but the defense is timeless: trust, but always verify.

Have you encountered an AI-powered scam? Report it to:

- Federal Trade Commission: reportfraud.ftc.gov
- FBI Internet Crime Complaint Center: ic3.gov
- Your state attorney general’s consumer protection division

Additional Resources:

- McAfee AI Hub: Information on AI voice cloning and deepfakes
- Identity Theft Resource Center: Free support for scam victims
- AARP Fraud Watch Network: Resources specifically for seniors

Remember: It’s not paranoia if they’re really using AI to clone your voice. Stay informed, stay skeptical, and stay safe.